Chaitanya Chakka

I work at the intersection of multimodal research and production-grade engineering, building systems that are interpretable, benchmarked, and fast.

About

I build AI systems that work in the real world, from research to production.

Currently exploring multimodal ML and how vision-language models process information.

Shipped systems at scale: 1500+ TPS APIs, real-time visualizations of millions of points, and containerized ML services.

Journey

My path through education and industry.

Data Scientist Intern

Prospect33 • New York, NY

- Built a low-latency WebGL/React visualization (Deck.gl + GPU buffers) that renders 8M+ points while sustaining 60 FPS.

- Designed a human-in-the-loop active-learning labeling workflow that surfaces the top 1% most informative points.

- Added GPU-accelerated embedding methods (PCA, t-SNE, UMAP, autoencoders) that improved downstream model F1 scores.

MS in Artificial Intelligence

Boston University • Boston, MA

Software Development Engineer

Cashfree Payments • Bangalore, India

- Spearheaded Kong API Gateway integration across 30 teams using Golang wrappers, serving ~1500 TPS of daily production traffic.

- Shipped custom Lua plugins and deployed them on Kubernetes with a PostgreSQL backend.

- Introduced Twilio for WhatsApp/SMS routing, improving delivery rates by ~20%.

BS in Computer Science

Birla Institute of Technology and Science, Pilani, Hyderabad Campus • Hyderabad, India

Publications

Research on multimodal learning, vision-language models, and NLP

Some Modalities Are More Equal Than Others: Decoding and Architecting Multimodal Integration in MLLMs

- Built MMA-Bench, a benchmark with controlled semantic misalignment across audio, video, and text.

- Developed an interpretability pipeline that combines black-box tests with white-box attention statistics.

- Applied LoRA fine-tuning, improving modality-specific accuracy by 20–40%.

Social Implications of OCEAN Personality: An Automated BERT-Based Approach

- Presents an automated approach that combines BERT with psycholinguistic features to accurately predict Big Five (OCEAN) personality traits from text, drawing on multiple combined datasets.

- Empirically investigates how social factors such as age, gender, profession, and zodiac sign relate to variations in personality.

Projects

Featured builds. Full list includes experiments and long-tail work.

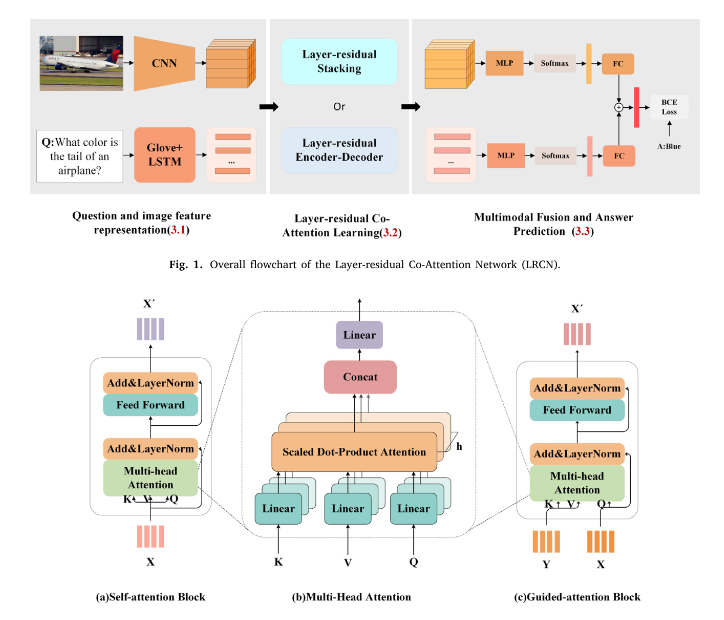

Built a multimodal VQA system combining ResNet-152 visual features with GloVe+LSTM text encoding. Implemented a layer-wise residual co-attention mechanism, achieving ~60% accuracy on the VQAv2 benchmark.

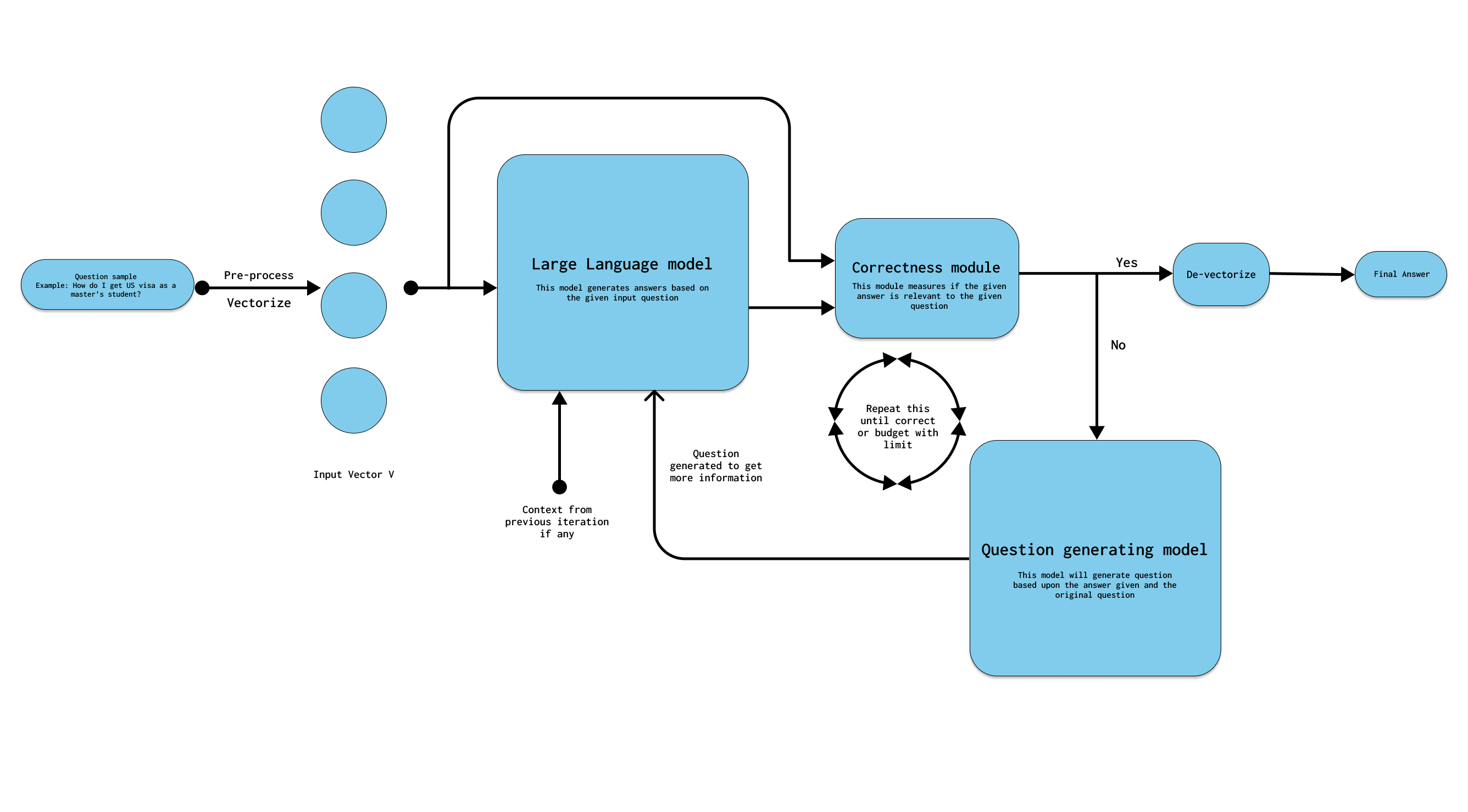

Developed a three-module pipeline that generates contextual follow-up questions with iterative correctness checks. Fine-tuned on 26k immigration QA pairs, improving ROUGE scores over the baseline.

Skills & Technologies

Tools and technologies I use to build AI systems

Languages

ML Frameworks

Infrastructure

ML & GenAI

Vision & Multimodal

Let's Connect

Always open to discussing multimodal research, ML systems, or interesting collaboration opportunities.

📍 Boston, MA